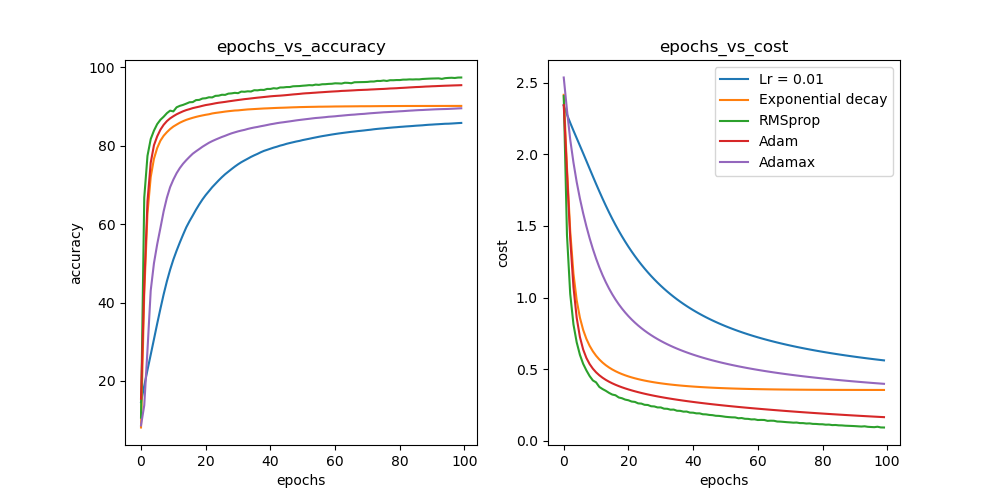

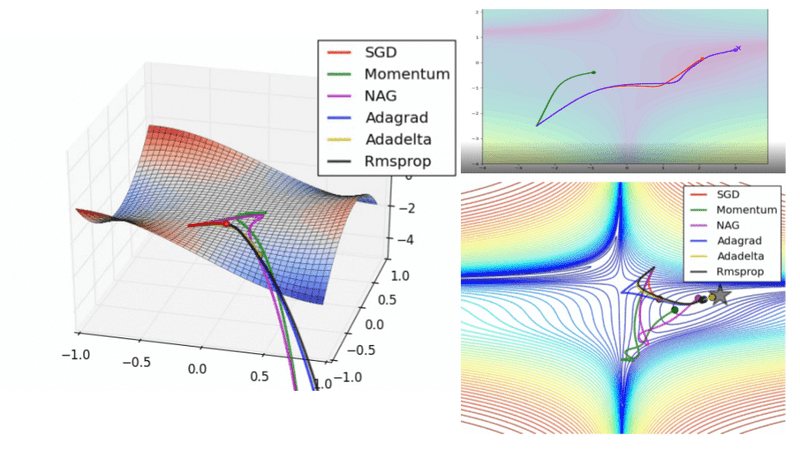

10 Stochastic Gradient Descent Optimisation Algorithms + Cheatsheet | by Raimi Karim | Towards Data Science

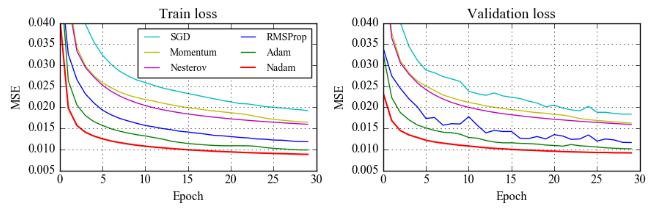

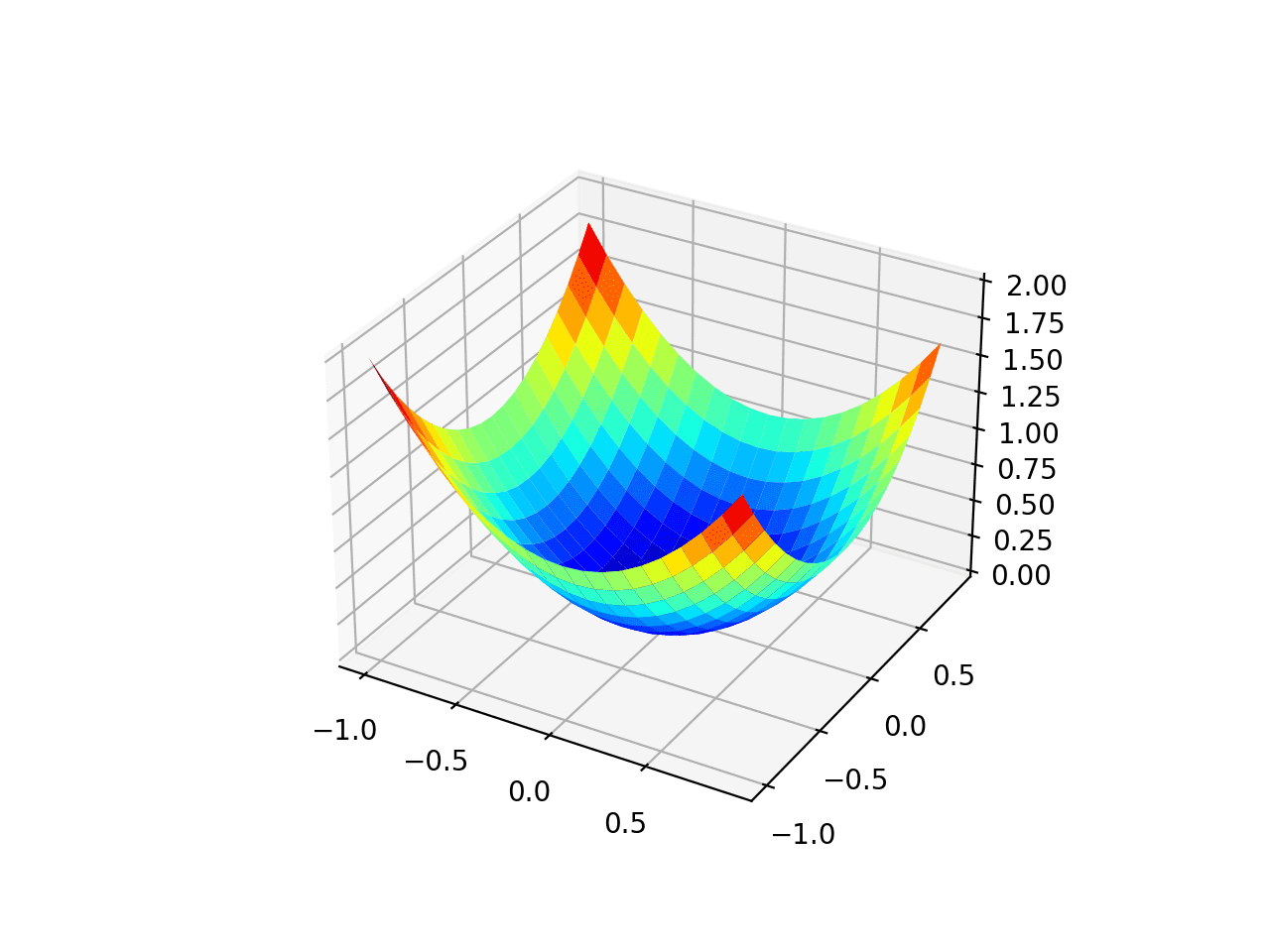

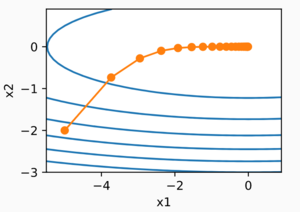

CONVERGENCE GUARANTEES FOR RMSPROP AND ADAM IN NON-CONVEX OPTIMIZATION AND AN EMPIRICAL COMPARISON TO NESTEROV ACCELERATION

![Convergence Guarantees for RMSProp and ADAM in Non-Convex Optimization and an Empirical Comparison to Nesterov Acceleration | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/f0a8159948d0b5d5035980c97b88038d444a1454/9-Figure3-1.png)

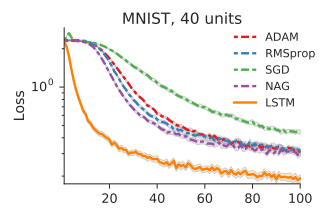

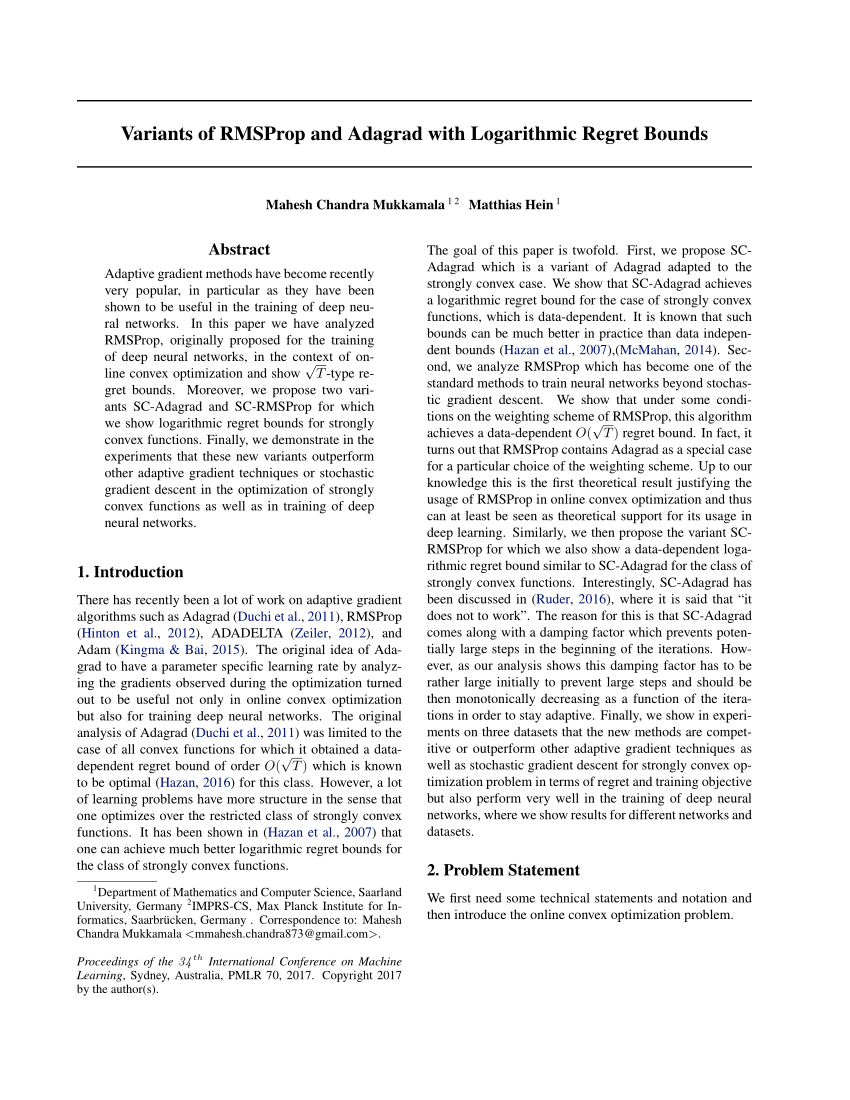

![A Sufficient Condition for Convergences of Adam and RMSProp | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/27f197e0401b854d14a41829e09209e38fe920b6/8-Figure3-1.png)